In endoscopy equipment projects, there is usually a strong urge to move quickly and ask the supplier to send a sample monitor right away. I understand that instinct. Teams want to see the image, touch the product, and get the evaluation moving. But in practice, a sample sent too early often creates more noise than useful judgment. What matters is not how fast the sample arrives, but whether the project has defined what that sample is supposed to prove.

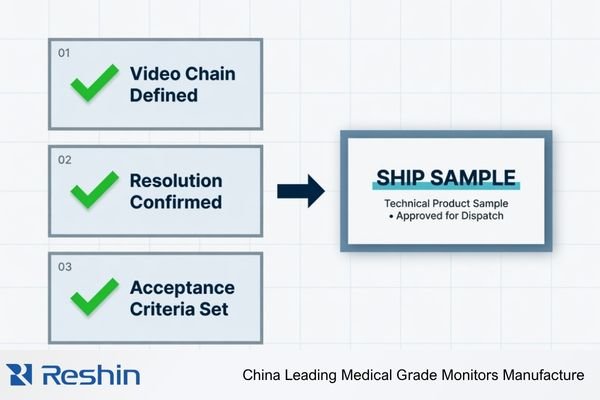

A meaningful sample review for endoscopy equipment starts only after the video chain is defined, the project direction is clear, and the acceptance criteria are written down. A sample test should validate system fit under realistic conditions, not just collect first impressions about image quality. A capable surgical monitor manufacturer should help the team confirm those conditions before the sample is shipped.

Before I recommend shipping a sample, I usually want the project team to lock four things first: the actual video chain, the real resolution direction, the validation goal, and the acceptance criteria. Without those boundaries, the test result can look decisive while still being misleading [1]. I have seen plenty of sample reviews where the screen looked good, but the signal path in the test setup was not the one the final equipment would use. In that situation, the feedback may sound positive, but it does not help the project move forward in a controlled way.

The best sample is rarely the one that looks the most impressive in the first ten minutes. The most useful sample is usually the one that helps the team verify the right assumptions under the right conditions. In our own project reviews, that is how we try to use sampling: not as a showroom moment, but as a controlled step in system validation.

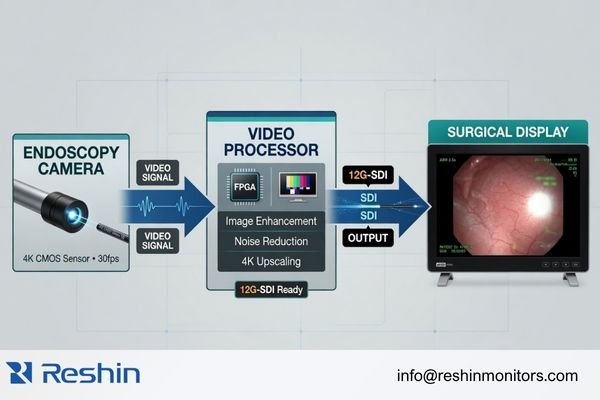

A Surgical Display Sample Only Becomes Meaningful After the Video Chain Is Defined

In endoscopy equipment projects, I often have to slow the conversation down before speeding it up. A sample tested without a clearly defined video chain can easily create the wrong conclusion.

A useful sample review has to follow a defined video chain: source, processor, output format, signal path, and any conversion in between. Only then can the sample confirm whether the display really fits the project instead of simply showing a good image in a temporary setup.

A sample only becomes valuable when it is tested against the real operating path [2] rather than a convenient temporary setup.

Why This Matters More Than the First Image Impression

A strong-looking image in a simplified setup can still be the wrong answer for the real system. I have seen samples perform well on a bench test and then behave differently once the actual processor, cable length, or switching path was introduced. That is why I treat the video chain as the first control point. When the path is defined, sample feedback becomes useful. When it is not, the team is often reacting to an image without really validating the system.
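
One way to make the video chain explicit is to write it down as data and compare the bench setup against it before anyone judges the image. The sketch below is only an illustration: the `VideoChain` fields, the `chain_mismatches` helper, and the example values (processor name, cable lengths, converter) are hypothetical and not taken from any specific project.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class VideoChain:
    """One end-to-end signal path, from source to display input (illustrative fields)."""
    source: str            # e.g. camera head or scope type
    processor: str         # endoscopy processor / camera control unit
    output_format: str     # e.g. "1080p60" or "2160p60"
    interface: str         # e.g. "3G-SDI", "12G-SDI", "HDMI"
    conversion: str        # any converter in the path, or "none"
    cable_length_m: float  # cable run between processor and display


def chain_mismatches(target: VideoChain, bench: VideoChain) -> list[str]:
    """Return the names of fields where the bench setup differs from the target chain."""
    return [
        f.name
        for f in fields(VideoChain)
        if getattr(target, f.name) != getattr(bench, f.name)
    ]


# Hypothetical example: the final equipment versus a convenient bench setup.
target = VideoChain("rigid scope camera", "processor A", "1080p60",
                    "3G-SDI", "none", 10.0)
bench = VideoChain("rigid scope camera", "processor A", "1080p60",
                   "HDMI", "SDI-to-HDMI converter", 2.0)

print(chain_mismatches(target, bench))
```

If the returned list is not empty, the sample feedback describes the bench setup rather than the final system, and the differences listed are the first things to close before the review counts.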

Buyers Should Confirm the Project Is Really FHD-Legacy or True 4K Before Requesting a Sample

A surprising number of sample reviews lose value because the project asks for the wrong tier of display from the beginning. Before anything else, I usually want to separate one question clearly: is this still an FHD-legacy project, or has it genuinely moved into a true 4K direction?

Sample value drops quickly when the requested tier does not match the actual system. Buyers should first confirm whether the processor and signal path are still FHD-led or already built for true 4K, and then request a sample that fits that direction.

If the processor path is still built around FHD outputs such as 3G-SDI, DVI, VGA, or older HDMI outputs, the sample should mainly prove compatibility, stable signal handling, and practical integration. In that situation, jumping too early to a 4K sample often adds confusion rather than value. The question is not whether a 4K screen looks better in theory. The question is whether the actual project is ready to validate that direction.

On the other hand, if the processor, source format, and signal path are already committed to true 4K, then only a real 4K endoscopy surgical display sample can tell the team whether the system is actually delivering the level of detail and performance it expects. In our project discussions, I usually treat this as the second control point after video-chain definition. Once the resolution direction is wrong, the rest of the sample review tends to drift with it.
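
A rough arithmetic check can help separate the two directions. The sketch below compares the active-video payload of a format against nominal single-link SDI rates. It rests on stated assumptions: 4:2:2 10-bit video (20 bits per pixel), blanking ignored, and SDI only (DVI, VGA, and HDMI are not modeled). The function names and the returned labels are hypothetical.

```python
# Nominal single-link SDI rates in Gbit/s.
SDI_RATE_GBPS = {"HD-SDI": 1.485, "3G-SDI": 2.97, "6G-SDI": 5.94, "12G-SDI": 11.88}


def active_payload_gbps(width: int, height: int, fps: int,
                        bits_per_pixel: int = 20) -> float:
    """Active-video payload only (assumes 4:2:2 10-bit; blanking is ignored)."""
    return width * height * fps * bits_per_pixel / 1e9


def smallest_sdi_link(width: int, height: int, fps: int):
    """Smallest single SDI link whose nominal rate covers the payload, else None."""
    need = active_payload_gbps(width, height, fps)
    for name, rate in sorted(SDI_RATE_GBPS.items(), key=lambda kv: kv[1]):
        if need <= rate:
            return name
    return None


def sample_direction(width: int, height: int, fps: int, link: str) -> str:
    """Suggest a sample tier from the processor's native output and the installed link."""
    is_uhd_source = width >= 3840 and height >= 2160
    link_carries_it = active_payload_gbps(width, height, fps) <= SDI_RATE_GBPS[link]
    if is_uhd_source and link_carries_it:
        return "true 4K"
    if is_uhd_source:
        return "unresolved: 4K source, but the link cannot carry it"
    return "FHD-legacy"


print(smallest_sdi_link(1920, 1080, 60))            # 3G-SDI
print(smallest_sdi_link(3840, 2160, 60))            # 12G-SDI
print(sample_direction(1920, 1080, 60, "3G-SDI"))   # FHD-legacy
print(sample_direction(3840, 2160, 60, "12G-SDI"))  # true 4K
```

The "unresolved" case is the one worth catching early: a 4K-capable source feeding a link that cannot carry it means the project has not yet committed to a true 4K path, whatever the display sample can do.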

Before Sample Testing, Buyers Need to Decide Whether They Are Validating Image Quality or System Fit

A lot of sample reviews become too subjective because the team never decides what the sample is actually there to validate. I often hear comments like “the image looks good,” but that is only one part of the job.

A sample may be used to evaluate image quality, but it should also be used to validate system fit. Those are related questions, but they are not the same question, and mixing them too early often leads to weak decisions.

For image quality, the team may care most about detail, glare behavior, color rendering, viewing comfort, and overall visibility under clinical lighting [3]. For system fit, the bigger issues are usually interface compatibility, signal recovery, mounting position, viewing distance, cable path, and cleanability. Both matter. The problem begins when a visually impressive sample is mistaken for an integration-ready answer.

| Validation Path | What the Sample Should Prove | Common Mistake |
|---|---|---|
| Image Quality Validation | Detail visibility, glare behavior, color and contrast impression, comfort during use | Letting one attractive image decide the whole sample outcome |
| System Fit Validation | Input compatibility, signal stability, mounting practicality, cleanability, workflow suitability | Assuming a screen that looks good will automatically fit the system well |

In our own review process, we usually separate these two checks on purpose. First we ask whether the display fits the signal path, mounting logic, and operating environment. Then we judge the viewing result inside that reality. That order saves time, and more importantly, it prevents a team from getting attached to the wrong sample for the wrong reason.

A Sample Test Without Acceptance Criteria Usually Creates Opinions, Not Decisions

From an engineering standpoint, the biggest problem in sample reviews is not that a sample fails. It is that the team finishes the review with several opinions and no decision.

Before the sample is shipped, the acceptance criteria should already be clear. Without defined boundaries for signal handling, physical fit, and expected behavior, even a positive sample review can remain too vague to support a project decision.

I usually encourage buyers to separate their criteria into three levels: what is required, what is acceptable with conditions, and what is not acceptable. That sounds simple, but it changes the quality of the review immediately [4].

For example, the team should know which input formats must work without compromise, whether signal conversion is acceptable, what screen size and mounting method are allowed, whether multi-input display is needed, and how quickly the display should recover after signal changes. Just as important, the team should decide whether the mass-production version is expected to match the tested sample in form, fit, and function. In our own projects, this is usually where the conversation becomes much more practical, because the sample stops being a visual preference test and becomes a real go-or-no-go checkpoint.
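
The three-level split can be written down so that a review ends in a decision rather than a set of opinions. The following is a minimal sketch, assuming every criterion can be expressed as a measured value with two thresholds. The criterion names and threshold values are hypothetical placeholders, not recommended limits.

```python
from dataclasses import dataclass
from enum import IntEnum


class Verdict(IntEnum):
    MEETS_REQUIREMENT = 0
    ACCEPTABLE_WITH_CONDITIONS = 1
    NOT_ACCEPTABLE = 2


@dataclass(frozen=True)
class Criterion:
    name: str
    required_max: float     # at or below this value: meets the requirement
    conditional_max: float  # at or below this value: acceptable with conditions

    def judge(self, measured: float) -> Verdict:
        if measured <= self.required_max:
            return Verdict.MEETS_REQUIREMENT
        if measured <= self.conditional_max:
            return Verdict.ACCEPTABLE_WITH_CONDITIONS
        return Verdict.NOT_ACCEPTABLE


def review_decision(criteria, measurements) -> str:
    """The worst single verdict decides the outcome of the whole review."""
    worst = max(c.judge(measurements[c.name]) for c in criteria)
    return {
        Verdict.MEETS_REQUIREMENT: "go",
        Verdict.ACCEPTABLE_WITH_CONDITIONS: "go with conditions",
        Verdict.NOT_ACCEPTABLE: "no-go",
    }[worst]


# Hypothetical criteria, agreed before the sample ships.
criteria = [
    Criterion("signal_recovery_s", required_max=2.0, conditional_max=5.0),
    Criterion("converters_in_path", required_max=0, conditional_max=1),
]
measurements = {"signal_recovery_s": 3.1, "converters_in_path": 0}

print(review_decision(criteria, measurements))  # go with conditions
```

Letting the worst verdict decide is a deliberate choice in this sketch: a sample that excels on most points but lands in the "not acceptable" band on one still produces a no-go, which is what keeps an attractive image from overriding an integration problem.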

Different Endoscopy Sample Directions Naturally Map to Different Representative Platforms

The purpose of this section is not to turn the article into a product list. The real point is to show that once the validation goal is clear, the sample tier usually becomes much easier to choose.

The table below works best as a sample-direction map, not as a ranking of models.

| Sample Direction / Validation Goal | What the Team Is Really Testing | Display Requirements | Recommended Model | Key Integration Considerations |
|---|---|---|---|---|
| Legacy / Basic Compatibility Sample | Whether the display can integrate reliably with older endoscopy processors and mixed legacy interfaces | Broad interface coverage, stable FHD behavior, dependable signal handling | MS247SA | Best suited to compatibility-led validation where legacy inputs and basic endoscopy integration matter most |
| Mainstream FHD Surgical Sample | Whether a standard FHD endoscopy system can achieve good image quality and practical OR fit | FHD resolution, stable signal lock, cleanable front design, practical mounting support | MS270P | Useful for mainstream endoscopy evaluation where image quality and routine system fit need to be reviewed together |
| True 4K Validation Sample | Whether an upgraded endoscopy system can really deliver true 4K workflow performance | Native 4K support, high-bandwidth interfaces, stronger optical and image-processing direction | MS275PA | Best when the processor path, source format, and project target are already committed to a real 4K direction |

FAQ About What to Confirm Before Sampling a Surgical Display for Endoscopy Equipment

Can buyers request a sample before confirming the processor output?

A sample can be requested, but I do not usually recommend serious evaluation before the processor output is clear. If the source format is still uncertain, the review result can be influenced by too many system variables and become less trustworthy.

Is sample testing mainly about checking image quality?

No. Image quality matters, but in endoscopy equipment projects a sample is also there to validate signal compatibility, stability, mounting practicality, cleanability, and overall system fit. Those points often decide more than the first visual impression.

Should a legacy FHD project jump directly to a 4K sample?

Not necessarily. I usually suggest checking whether the processor path and project target genuinely support 4K first. Otherwise, a 4K sample can distort the review instead of helping it.

Does one successful sample mean the project risk is already low?

No. A successful sample is only one stage result. The team still needs to confirm the specification tied to that sample, later production consistency, and how the supplier responds to technical feedback during the review.

The Best Sample Is Not the One That Looks Good First, but the One That Verifies the Right Project Assumptions

Before sampling, buyers should confirm four things clearly: the video chain, the real resolution direction, the validation goal, and the acceptance criteria. That is what turns a sample from a casual viewing exercise into a useful project decision.

For me, the most useful sample is usually not the one that looks the most impressive at first glance. It is the one that helps the team verify processor output, interface path, mounting logic, and later production direction under realistic conditions. In our own projects, that is how we try to handle sample reviews as well: first define what the sample must prove, then test it in a way that reflects the real system. If your team is preparing a sample evaluation for an endoscopy equipment project, the most useful next step is usually to align those conditions with a surgical monitor manufacturer before the sample is shipped.

✉️ info@reshinmonitors.com

🌐 Surgical Monitor Manufacturer

[1] "Acceptance Criteria: Everything You Need to Know Plus Examples", https://resources.scrumalliance.org/Article/need-know-acceptance-criteria. Industry-standard testing frameworks emphasize that clearly defined acceptance criteria are essential to correctly interpret test results and avoid misleading conclusions. Scope note: Applies broadly to testing methodologies and may not address video chain specifics.

[2] "Guide to Standard HD Digital Video Measurements – Tektronix", https://www.tek.com/tw/documents/primer/guide-standard-hd-digital-video-measurements. Industry standards bodies like SMPTE advise that display samples be evaluated within their intended signal chain to accurately assess performance under operational conditions. Scope note: Recommendations are based on broadcast and professional video standards and may vary for other contexts.

[3] "Part A: Performance evaluation and performance studies", https://de-mdr-ivdr.tuvsud.com/Part-A-Performance-evaluation-and-performance-studies.html. International standards for medical imaging displays (e.g., IEC 62563-1) specify key image quality criteria, such as spatial resolution (detail visibility), glare management, color fidelity, luminance uniformity (viewing comfort), and performance under clinical lighting. Scope note: Applies specifically to medical imaging workstation displays; other clinical settings may have additional or different criteria.

[4] "Using the MoSCoW Method to Prioritize Projects – ProjectManager", https://www.projectmanager.com/training/prioritize-moscow-technique. The MoSCoW prioritization technique groups requirements into must-haves, should-haves, could-haves, and won’t-haves, and has been shown in software and project management contexts to enhance decision clarity and review effectiveness. Scope note: Originally developed for software project management; direct impact on hardware procurement reviews may vary.